Search engine optimization (SEO) is essential for staying competitive online.

With 85% of consumers using the internet to find local businesses, having a strong online presence is vital.

To improve your SEO ranking, your website has to be fine-tuned to thrive online.

This process is called technical SEO.

Technical SEO is simply the process of calibrating a website to rank online.

You can’t just create a website and hope for the best.

It has to be configured by a professional for it to do any good online.

Do you want to learn more about how you can leverage technical SEO in 2020?

This guide will tell you all you need to know about the subject.

All About Website Audits

A website audit is a comprehensive diagnostic test of your site’s status.

It evaluates your website’s readiness to rank online and points out the critical errors that are stopping your site from reaching its full potential.

Web agencies perform website audits all the time to get to the bottom of their clients’ issues.

This helps them deliver effective solutions that improve the SEO potential of their clients’ websites.

Gone are the days when you had to guess what’s wrong with your website.

Having a website audit performed will give you all the answers on how to move forward with its maintenance.

Once you receive your website audit, you can continue optimizing your website by keeping an eye on important technical SEO tasks.

We’ll discuss all of these tasks in greater detail below.

Technical SEO 2020 Checklist

Based on the results of your website audit, you can refer to the following checklist to learn how to improve your website.

Responsiveness:

Ideally, your website should be responsive on every platform, from desktop computers to mobile devices.

Google can even rank your website lower if it isn’t optimized for mobile devices.

This is a common problem for a lot of online businesses.

Therefore, make sure your website works seamlessly on all platforms people use to search, especially mobile devices.

Website Speed:

How long do you think a website should take to load?

It should take between two and five seconds for your website to load.

Most web users, though, expect a website to load within two seconds.

Now, here’s the kicker.

Visitors will generally spend less than 15 seconds on your website.

That’s right, only 15 seconds…

If your website takes too long to load, you could lose qualified leads by the day.

With that said, do you know how fast your website loads?

You can use Google’s free PageSpeed Insights tool to find out.
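For a rough first check of your own, the sketch below times how long the raw HTML of a page takes to download and labels the result against the two- and five-second marks mentioned above. The function names and labels are made up for illustration, and the measurement is only an approximation: it ignores images, scripts, and rendering, so a real visitor will usually wait longer.

```python
import time
import urllib.request

# Thresholds taken from the guidance above: two seconds is what most
# users expect, five seconds is the upper end of the suggested range.
FAST_SECONDS = 2.0
SLOW_SECONDS = 5.0


def classify_load_time(seconds: float) -> str:
    """Label a load time against the two- and five-second marks."""
    if seconds <= FAST_SECONDS:
        return "fast"
    if seconds <= SLOW_SECONDS:
        return "acceptable"
    return "slow"


def time_html_download(url: str, timeout: float = 10.0) -> float:
    """Return the seconds taken to download the raw HTML at `url`.

    Only the HTML document is timed, not images, scripts, or rendering.
    """
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read()
    return time.perf_counter() - start
```

Calling classify_load_time(time_html_download("https://www.example.com/")) with your own URL in place of the placeholder returns one of the three labels.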

Configuring Robots.txt:

Robots.txt is a file websites use to give instructions to web crawlers and other bots, following a convention known as the robots exclusion protocol.

Since search engines rely on web crawlers to scan and index your site, setting up this file correctly is very important.

This file defines which areas of your site these robots are allowed to crawl and which areas are off-limits.
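As an illustration, here is a small robots.txt and a way to check how a crawler would read it, using the robots.txt parser in Python’s standard library. The rules, the /admin/ path, and the example.com URLs are placeholders invented for this sketch, not recommendations for any particular site.

```python
from urllib.robotparser import RobotFileParser

# A hypothetical robots.txt: every crawler may visit the site,
# except for anything under /admin/.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /

Sitemap: https://www.example.com/sitemap.xml
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt content that is already in memory."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# A public blog post is allowed; the admin area is restricted.
print(parser.can_fetch("*", "https://www.example.com/blog/post"))
print(parser.can_fetch("*", "https://www.example.com/admin/settings"))
```

Running a check like this before publishing a new robots.txt is a cheap way to confirm you have not blocked pages you want indexed.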

Configuring Sitemap.xml:

A sitemap.xml file makes it convenient for Google, Yahoo, Bing, and other search engines to quickly crawl your website.

The name is pretty self-explanatory: a “sitemap” is a map of the pages that make up your website.

Search engines use these files to identify different posts and pages on your site.
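To show what such a file contains, the sketch below builds a minimal sitemap.xml from a list of page URLs. The build_sitemap helper and the example.com addresses are hypothetical; a real sitemap can also carry optional fields such as the last modified date, which are left out here.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal sitemap.xml document listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        url_element = ET.SubElement(urlset, "url")
        ET.SubElement(url_element, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder pages for illustration only.
print(build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/blog/technical-seo",
]))
```

Each page gets its own url entry with a loc element, which is the part search engines read to discover the posts and pages on your site.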

If you are using the WordPress plugin, Yoast SEO, then you should already have a sitemap as an XML file.

Otherwise, you will have to download and install a Google XML sitemap plugin.

Navigating 404 Errors:

You’ve likely seen a 404 error after clicking a broken link.

A 404 error occurs when no content is available at a given URL.

It’s basically the internet’s version of a brick wall.

404 errors are almost always accidental.

You can even run into these errors by mistyping a URL.

You have the option to create a custom 404 error page for whenever this issue occurs.

However, the goal is to reduce these errors so that web visitors and crawlers can navigate your website without any hassle.
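One way to spot these errors is to request each URL you care about and look at the status code that comes back. The sketch below does this with Python’s standard library. The helper names are invented for illustration, and it assumes the server answers HEAD requests, which not every server does.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for `url` using a HEAD request."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises on 4xx and 5xx responses; the code is on the exception.
        return error.code


def find_not_found(
    urls: Iterable[str],
    get_status: Callable[[str], int] = http_status,
) -> list[str]:
    """Return the URLs whose status code is 404."""
    return [url for url in urls if get_status(url) == 404]
```

Passing get_status as a parameter keeps the check easy to try out with a stand-in function before pointing it at a live site.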

Fixing Broken Links:

Broken links are frankly embarrassing.

It’s challenging enough to attract qualified leads to your website.

Having them click on a broken link will send them straight to the close button.

To improve the user experience, check your site for broken links periodically and fix them quickly.
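A periodic check starts with a list of the links on each page. The sketch below collects them from a page’s HTML using Python’s built-in parser. The class and function names are hypothetical, and it only looks at ordinary anchor tags, skipping in-page anchors, email links, and phone links.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect the absolute URL of every <a href="..."> on a page."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Skip in-page anchors and non-web links.
        if href and not href.startswith(("#", "mailto:", "tel:")):
            self.links.append(urljoin(self.base_url, href))


def extract_links(html: str, base_url: str) -> list[str]:
    """Return every link found in `html`, resolved against `base_url`."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.links
```

Each collected URL can then be requested in turn, and any that fail to load are the broken links to fix.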

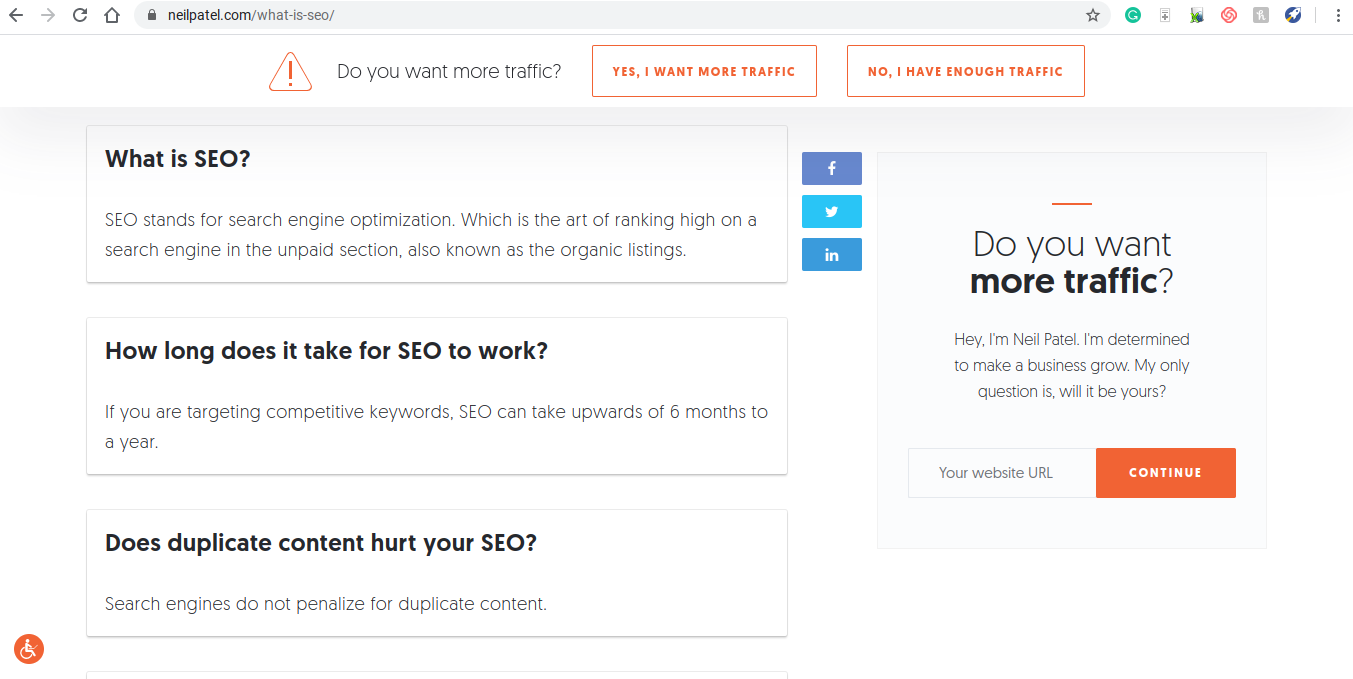

Structured Data:

Don’t worry…

Structured data isn’t as complicated as the tasks we’ve just mentioned.

It’s simply a standardized way of labeling the content on your website.

Structured data tells search engines what the content on your website means.

For example, including an FAQ section at the bottom of a blog post, as Neil Patel did, is a great way to earn a rich snippet.

A small tweak like this can result in thousands of website visitors.
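For an FAQ section, structured data is commonly written as JSON-LD using the FAQPage type from schema.org. The sketch below generates that markup from a list of question and answer pairs. The helper name and the sample question are made up for illustration, and adding the markup does not guarantee that a search engine will show a rich snippet.

```python
import json


def build_faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Return schema.org FAQPage markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder question and answer for illustration only.
print(build_faq_jsonld([
    ("What is technical SEO?",
     "The process of calibrating a website to rank online."),
]))
```

The output is meant to be placed on the page inside a script tag whose type is application/ld+json, alongside the visible FAQ text it describes.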

Meta Title and Meta Description:

A meta description is a short piece of text that appears just under a page’s title in search results.

A meta title is the title tag that tells search engines and users what the web page is about.

Optimizing both tags can improve your site’s click-through rate (CTR).

Make sure you include your primary keywords in your meta title and description to help the page show up in relevant searches.

Also, create irresistible meta titles that potential customers can’t help but click on.
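A quick way to review these tags is to read them out of a page’s HTML and flag anything missing or overly long. The sketch below does that with Python’s built-in parser. The names are hypothetical, and the 60- and 160-character limits are only commonly quoted rules of thumb assumed for this example, not official cutoffs.

```python
from html.parser import HTMLParser


class MetaTagReader(HTMLParser):
    """Read the <title> text and the meta description from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_meta_tags(html: str, max_title: int = 60, max_description: int = 160) -> list[str]:
    """Return a list of problems found; the default limits are assumptions."""
    reader = MetaTagReader()
    reader.feed(html)
    reader.close()
    title = reader.title.strip()
    description = reader.description.strip()
    problems = []
    if not title:
        problems.append("missing title")
    elif len(title) > max_title:
        problems.append(f"title is {len(title)} characters (over {max_title})")
    if not description:
        problems.append("missing meta description")
    elif len(description) > max_description:
        problems.append(f"meta description is {len(description)} characters (over {max_description})")
    return problems
```

An empty list means both tags are present and within the assumed limits; whether they are compelling enough to click on still takes a human eye.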

Image Optimization:

Images play a pivotal role on every website.

However, an image is just data to a crawler, which doesn’t have eyes to see what the image is about.

Reducing the file sizes of your images, adding alt text, and similar tasks prepare them to be identified and indexed by web crawlers.
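Alt text is one of the easier items to check automatically. The sketch below lists the images on a page that have no alt text, using Python’s built-in parser. The names are invented for illustration, and it treats an empty alt attribute as missing, even though an empty value can be a deliberate choice for purely decorative images.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Record the src of every <img> that has no alt text."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # An absent or blank alt attribute counts as missing here.
        if not (attributes.get("alt") or "").strip():
            self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html: str) -> list[str]:
    """Return the src values of images without alt text."""
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

The returned list gives you the exact image files to revisit when writing descriptions for crawlers.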

Need Help?

Whew…

We just went over a lot of information.

If you’re not familiar with SEO, it may be difficult to understand any of this, much less put these tasks into effect.

Fortunately, we provide outstanding websites and perform all of these tasks so you don’t have to.

Sound interesting?

To speak to a member of our dedicated sales team, give us a call today at 1-888-678-8662 or click here to schedule a free consultation.
